Understanding the Re-Setting of Cut Scores in Mississippi’s Accountability System, Part 2

By Rachel Canter

This blog post is Part 2. Read Part 1 here: https://www.mississippifirst.org/blog/understanding-re-setting-cut-scores-mississippis-accountability-system-part-1/.

Setting Cut Scores

The process of setting cut scores is in part technical and in part political. First, staff at the Mississippi Department of Education (MDE) compile all the scores from schools and districts and rank each from highest to lowest. (Schools must be grouped according to how many points each is eligible for before doing this so that a school that can earn 1,000 points is not compared against a school that can only earn 700.) Once this ranking is complete, MDE staff and technical advisors must use professional judgement to determine what should constitute an “A” or an “F.”

Using a set of guidelines, they run several trials with different cut scores to see how each set affects the whole picture and how consistent the results are with those guidelines. Once they are satisfied with the results, they make a recommendation to the Commission on School Accreditation, which must then make a recommendation to the State Board of Education (although the Department can make its own recommendation to the State Board that does not align with the Commission’s). The State Board of Education makes the final decision on the cut scores and approves grades.
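To make the idea of a "trial" concrete, here is a minimal sketch in Python of how one might compare the grade distribution produced by two candidate sets of cut scores. The function name, the point totals, and the cut scores are all hypothetical and chosen only for illustration; this is not MDE's actual procedure or data.

```python
from bisect import bisect_right
from collections import Counter

GRADES = ["F", "D", "C", "B", "A"]  # ordered from lowest to highest


def grade_distribution(points, cuts):
    """Count how many districts earn each grade under one set of cut scores.

    points: accountability points for districts in the same eligibility group.
    cuts:   ascending minimum points needed for D, C, B, and A (hypothetical).
    """
    counts = Counter(GRADES[bisect_right(cuts, p)] for p in points)
    return {grade: counts.get(grade, 0) for grade in GRADES}


# Hypothetical point totals and two hypothetical sets of cut scores.
district_points = [480, 520, 560, 610, 640, 700]
trial_1 = grade_distribution(district_points, [500, 550, 600, 650])
trial_2 = grade_distribution(district_points, [450, 500, 550, 600])
print(trial_1)
print(trial_2)
```

Running the same districts through each candidate set shows how sensitive the overall picture is to where the lines are drawn, which is the kind of comparison the trials are meant to support.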

When MDE went through this process last year (2016), final accountability data (the number of points schools or districts earned) was not yet available, so the Department had to run all of its trials using percentiles (for example, districts at or above 63% of all districts but below 90% of all districts would earn a “B”) instead of actual numerical cut scores (such as 626 points). The State Board approved the percentiles and then later approved the actual cut scores once the data was finalized. The percentiles that the State Board approved for districts were as follows:

| Grade | Percentile range |
| --- | --- |
| A | 90% or above |
| B | 63% or above but below 90% |
| C | 38% or above but below 63% |
| D | 14% or above but below 38% |
| F | Below 14% |
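The table above can be read as a simple lookup from a district's percentile to a grade. The sketch below shows that lookup in Python. The boundaries come from the table, but the function names are hypothetical, and the definition of percentile used in `percentile_ranks` (the percent of districts scoring strictly lower) is an assumption, since the post does not say how MDE computed it.

```python
from bisect import bisect_right

# Lower percentile bound for D, C, B, and A, taken from the table above.
LOWER_BOUNDS = [14, 38, 63, 90]
GRADES = ["F", "D", "C", "B", "A"]  # ordered from lowest to highest


def grade_for_percentile(percentile):
    """Return the letter grade for a district at the given percentile (0-100)."""
    return GRADES[bisect_right(LOWER_BOUNDS, percentile)]


def percentile_ranks(points):
    """Percent of districts scoring strictly below each district.

    This definition is an assumption made for illustration only.
    """
    n = len(points)
    return [100 * sum(other < p for other in points) / n for p in points]


# Hypothetical point totals for four districts.
ranks = percentile_ranks([500, 600, 700, 800])
grades = [grade_for_percentile(r) for r in ranks]
print(list(zip(ranks, grades)))
```

Because each grade is tied to a share of districts rather than to a fixed number of points, some districts always land in the bottom band under this approach, which is the source of the controversy described next.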

Because MDE used percentiles to set the cut scores, some stakeholders claimed that the lowest 14% of schools would be destined to fail every year. This claim caused so much controversy that MDE had to release a statement promising that numerical cut scores, not percentiles, would be used going forward. The debate now is over MDE’s recommendation to re-do this process of setting the cut scores, which means a return to percentiles for one more year and, with it, a guarantee that a certain percentage of districts will earn an “F.” The next section explains why MDE has recommended re-doing the cut scores.

Rationale MDE Provided for Changing the Cut Scores

To determine 2015-2016 accountability scores, MDE had to figure out how to calculate growth when the state used a different test in 2015-2016 (MAP) than in 2014-2015 (PARCC). MDE’s solution was to use a statistical “equating process” to determine how a score on MAP compared with a score on PARCC. This allowed MDE to assign growth scores to schools and districts.

This year (2016-2017) is the first time in four years that Mississippi students have taken the same test two years in a row. Calculating growth using the same test is easy because all MDE has to do is note whether a child moved up a performance level (or crossed the mid-point of one of the lowest levels) from last year to this year.
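As a rough illustration of the same-test growth check described above, here is a minimal Python sketch. It covers only the rule as stated in this post. The function name, the way a student's result is represented, and the choice of which levels count as the "lowest levels" (passed in as `split_levels`) are all assumptions, not MDE's actual business rules.

```python
def showed_growth(prev, curr, split_levels):
    """Return True if a student showed growth from last year to this year.

    prev, curr:   (performance_level, in_upper_half) tuples, one per year.
    split_levels: the levels treated as having a mid-point (hypothetical).
    """
    prev_level, prev_upper = prev
    curr_level, curr_upper = curr

    # Moving up at least one performance level counts as growth.
    if curr_level > prev_level:
        return True

    # Staying in the same level counts only if that level has a mid-point
    # and the student crossed it from the lower half to the upper half.
    return (
        curr_level == prev_level
        and curr_level in split_levels
        and curr_upper
        and not prev_upper
    )


# Assume, for illustration only, that levels 1 and 2 are the split levels.
LOWEST_LEVELS = {1, 2}
print(showed_growth((2, False), (3, False), LOWEST_LEVELS))  # moved up a level
print(showed_growth((1, False), (1, True), LOWEST_LEVELS))   # crossed a mid-point
print(showed_growth((4, False), (4, True), LOWEST_LEVELS))   # level 4 is not split
```

No equating step is needed here because both years use the same performance levels, which is why the calculation is so much simpler than in 2015-2016.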

MDE noticed something odd about the accountability data after it was calculated this year, though. Students scored higher in nearly every grade and subject this year, but growth scores were lower. Staff determined this was likely due to the way that they calculated growth last year: in other words, the “equating process” from 2015-2016 overestimated growth for many students.

A year of lower growth data when student scores actually improved is frustrating, but the problem is that the cut scores that determine grades were based in part on those inflated growth scores. This left MDE with a dilemma. Should they re-set the cut scores, even though they promised last year that they would not change the targets for several years? What is more important—more realistic cut scores or stability in the system? MDE decided changing the scores was worth it. (See the board agenda item: https://mdek12.org/sites/default/files/documents/MBE/MBE%20-%202017%20(8)/tab-05-oa_reestablished-cut-scores_v1.pdf.)

Here’s the heart of the matter: who benefits from a change in cut scores? A-rated districts? F-rated districts? All districts? None of the districts? Schools and districts have been working hard for a full year believing that they need to exceed the 2015-2016 cut scores if they want a better grade. This is particularly true of schools and districts that earned an “F” last year. If changing the cut scores helps all districts, most people would not bat an eye, even though the goalposts have moved. If changing the cut scores helps some schools and districts—A-rated districts—and not others—F-rated districts—how is the change fair?

The next post explains the various proposals made, the proposal adopted by the State Board, and the implications of that decision.

Related Posts

By Grace Breazeale | Director of Research and K-12 Policy This week, the National Center for Education Statistics released 2024 National Assessment of Education Progress (NAEP) assessment results, commonly referred to as the “Nation’s Report Card.” The assessment is administered across the nation every two years in grades 4 and 8. Unlike state-specific standardized tests, the NAEP […]

Editor’s Note: This is the second part of a two-part series on third grade literacy achievement. You can find the first part here. *** By Grace Breazeale | Director of Research and K-12 Policy In the first part of this series, we explored the effectiveness of the Literacy-Based Promotion Act (LBPA) across Mississippi school districts, as well […]

Editor’s Note: This is the first part of a two-part series on the Literacy-Based Promotion Act. *** By Grace Breazeale | Director of Research and K-12 Policy Between 2013 and 2019, fourth grade reading scores on the nationwide NAEP test increased by ten points in Mississippi–a larger increase than in any other state during this time […]

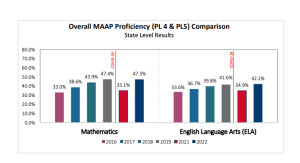

By Toren Ballard For the second year in a row, results from the Mississippi Academic Assessment Program (MAAP) exams suggest that Mississippi is continuing to defy nationwide trends in educational achievement: proficiency levels across all subjects were at all-time highs in 2023, and Mississippi is now one of just two states nationwide to have exceeded its pre-pandemic proficiency […]

Editor’s Note: This blog post is in response to a column appearing in the LA Times titled “How Mississippi gamed its national reading test scores to produce ‘miracle gains.‘” We’re not going to link to it because we don’t believe it is worth your clicks; however, feel free to Google. *** This morning, I was […]

Editor’s Note: This blog post is part of an ongoing series of posts dedicated to K-12 education policy in Mississippi. *** By Grace Breazeale | Director of Research and K-12 Policy Mississippi’s high school graduation rate has steadily risen over the past five years, which would typically be a cause for celebration. But in this case, […]

Editor’s Note: This blog post is part of an ongoing series of posts dedicated to K-12 education policy in Mississippi. *** By Grace Breazeale | Director of Research and K-12 Policy In early 2021, the Mississippi Department of Education released its first-ever social-emotional learning (SEL) standards. The release of these standards was a positive step towards increasing […]

By Toren Ballard This fall, Mississippians received two widely divergent narratives about the state of public education post-COVID: On September 29, the Mississippi Department of Education (MDE) released school and district accountability grades for the 2021-2022 school year, showing unprecedented improvement: 87% of districts were rated “C” or higher, up from 70% in 2019, the last time MDE […]

By Rachel Canter | Executive Director When I was a first year teacher, I asked my students a basic question about American history—“Why do we celebrate Dr. Martin Luther King, Jr.’s birthday every year?”—as a way of introducing Dr. King’s “I Have a Dream” speech. Without exception, the answer I got from every class was that […]

Today, we released a statement on American history from our Executive Director, Rachel Canter. In partnership with that statement, Mississippi First has developed the following principles for assessing curriculum-related legislation. These principles are based on our organizational values. We believe these principles are commonsense. In a recent nationwide poll of parents, large majorities of both Republicans and Democrats agreed […]

By Rachel Canter Yesterday, we did a thread to explain school grades. Here’s some additional information about growth because it is the hardest part to understand. Usually, when schoolwide proficiency goes up, growth will go up as well, BUT it is possible to have higher proficiency scores schoolwide from one year to the next and still have a […]

By Rachel Canter School grades came out unofficially yesterday, and we’re already seeing (sometimes uninformed) chatter about what they mean. Here are a few pointers: Our state accountability system is based mostly on how well kids do on their end-of-year tests. The end-of-year tests are given in reading and math to all kids in grades 3-8, […]